Last Updated on March 19, 2026

Welcome. You’re about to get a clear, practical map for using software to help run teams and tasks without losing sight of people.

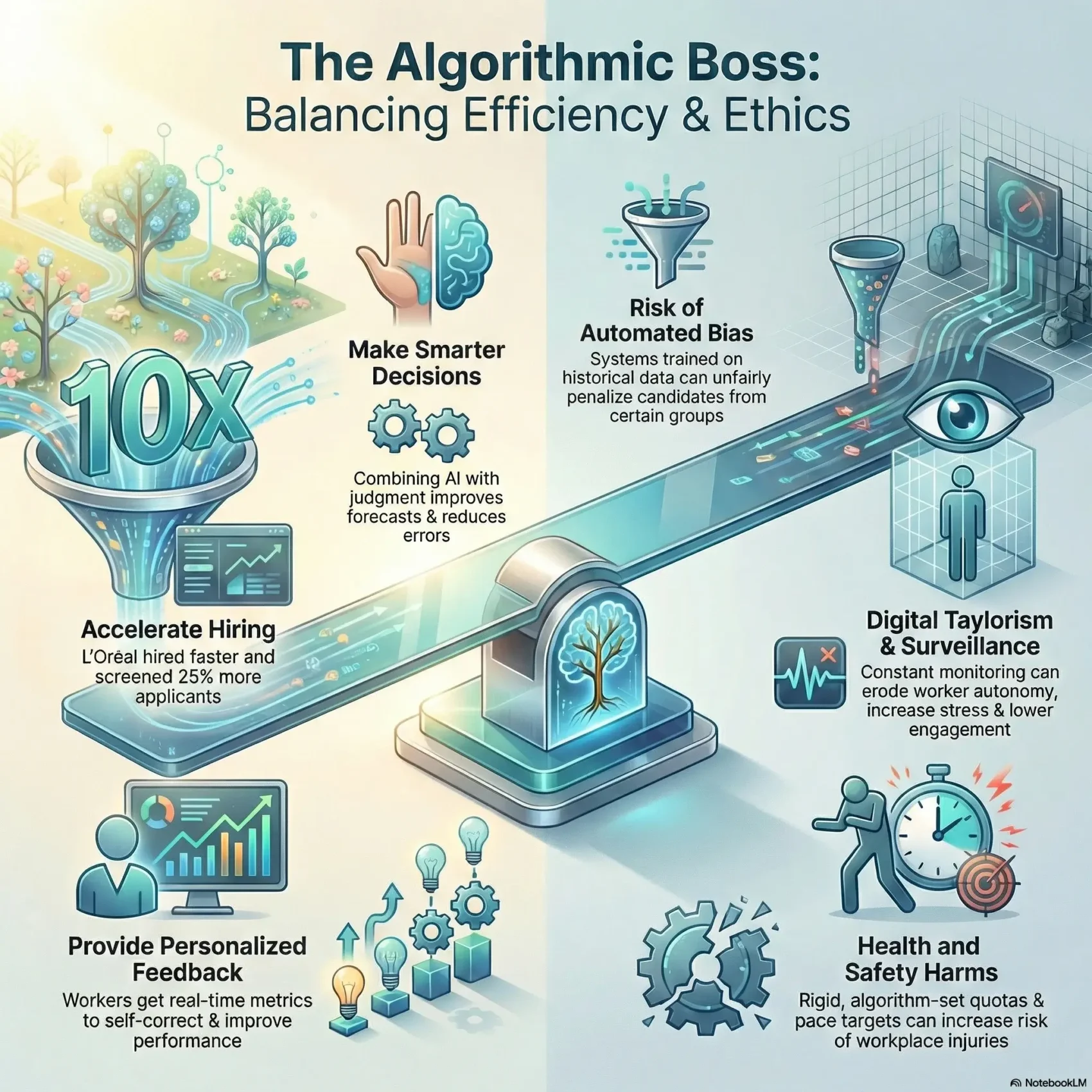

Algorithmic management began on platforms like Uber, Deliveroo, and Upwork and now reaches into traditional organizations. L’Oréal, for example, reported hiring ten times faster and interviewing 25% more applicants after automating the early steps of recruiting with artificial intelligence.

You’ll learn how data and tools can boost speed, consistency, and scale while also creating risks like bias, opacity, and trust gaps. The guide shows what this shift means for your managers, your workers, and your business choices.

U.S. policy is changing fast. New York moves toward anti-bias audits and Illinois limits video interview analysis. Proposals like the Stop Spying Bosses Act and NLRB guidance highlight how surveillance affects worker voice.

Key Takeaways

- You’ll get a practical map to use data and AI while protecting people and fairness.

- See why companies shift decisions into software and what it means for managers and workers.

- Learn the essential questions to ask before adopting new systems.

- Understand where tools add value and where they raise risk.

- Get a quick view of U.S. policy that affects how you design processes.

Algorithmic management

Data-driven tools are reshaping how teams are scheduled, assessed, and coached on the job.

What it is and why it matters right now

In plain terms, algorithmic management is the strategic tracking, evaluating, and steering of workers using software that takes on tasks once handled by people.

Early research by Lee, Kusbit, Metsky, and Dabbish (2015) showed how platforms like Uber and Lyft used algorithms to allocate and evaluate work. That research helped spot patterns now moving into traditional organizations.

- Prolific worker data collection and surveillance that captures location, speed, and outcomes.

- Real-time responsiveness that routes tasks or updates schedules instantly.

- Automated or semi-automated decisions that speed routine choices and free managers for judgment calls.

- Ratings and metrics used to evaluate performance and guide feedback.

- Nudges or penalties that shape behavior, from reminders to incentives.

“These systems change who makes routine decisions and how information flows across work.”

As a manager or HR leader, watch how these practices pair with human judgment. Learning systems improve as they collect more data, and being open about how they work builds trust with your teams.

How algorithmic management works across platforms and traditional organizations

Across gig apps and enterprise tools, data flows from devices into decision engines that assign tasks and shape daily work. Systems ingest operational and behavioral data via apps, sensors, and software so you can make faster, consistent choices at scale.

From data collection and surveillance to real-time decisions

Apps and devices capture location, timestamps, and activity logs. That information feeds models and rules that assign tasks, route work, or trigger alerts in real time.

Platform examples: Uber uses dynamic dispatch, pricing, and driver ratings. Traditional firms apply CV analysis, NLP on written responses, and video signals to shortlist candidates and populate dashboards.

Ratings, nudges, and automated performance feedback in practice

Deliveroo sends monthly reports showing metrics like time to accept orders and travel time. These ratings become nudges: leaderboards, badges, or threshold alerts that change behavior.

- Trace the pipeline: collection → surveillance mechanisms → decision engine so you see how information powers decisions.

- Decide which tasks suit automation and which should remain with managers using a simple rubric: repeatable, high-volume, low-context tasks are best automated.

- Watch system latency and time-to-decision trade-offs: instant prompts speed action but can harm experience if poorly tuned.
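The rubric in the list above (repeatable, high-volume, low-context) can be written down as a small scoring function. This is a minimal sketch, not a validated method: the `Task` fields, the three labels, and the rule that two out of three criteria means "augment" are all illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    repeatable: bool   # same steps every time
    high_volume: bool  # happens often enough to justify automation
    low_context: bool  # needs little situational judgment


def automation_fit(task: Task) -> str:
    """Classify a task with the three-criteria rubric (assumed cutoffs)."""
    score = sum([task.repeatable, task.high_volume, task.low_context])
    if score == 3:
        return "automate"
    if score == 2:
        return "augment"  # software recommends, a manager decides
    return "keep with manager"


# Hypothetical tasks, scored by hand for illustration.
tasks = [
    Task("resume keyword screening", True, True, True),
    Task("shift scheduling", True, True, False),
    Task("disciplinary review", False, False, False),
]

results = {t.name: automation_fit(t) for t in tasks}
for name, fit in results.items():
    print(f"{name}: {fit}")
```

Scoring the tasks together with the managers who do them today is likely to surface context the rubric alone would miss.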

“Design nudges to support performance improvement, not to punish.”

Finally, focus feedback on actionable metrics and avoid metric fixation. Good tools summarize complex signals for you, helping coordinate teams while keeping human accountability clear.

Where it came from: Gig platforms to enterprise HR

The first big cases came from ride-hail and delivery apps, where software routed tasks, set incentives, and scored workers in real time.

2015 research on Uber and Lyft showed how systems allocated rides, optimized pricing, and evaluated drivers. Those early experiments proved the playbook: collect large datasets, tune models, and automate routine decisions.

Over time, the same ideas moved from gig platforms like Deliveroo and Upwork into companies and traditional organizations. HR teams now use data to screen candidates, schedule shifts, and monitor performance.

That shift didn’t appear overnight. Digital direction of labor traces back to manufacturing in the 1970s. Modern cloud tools, cheaper compute, and abundant data made these systems practical for any size organization.

- You’ll see why gig-era playbooks scaled into enterprise HR—and which parts translate cleanly.

- Learn how job structures and labor expectations changed as signals began guiding daily work.

- For broader context, review current trends in the gig economy.

“Early platform cases offer useful lessons, but copying tactics without adaptation risks harm.”

The upside: Efficiency, better decisions, and personalized feedback

Smart tools can turn slow hiring queues into fast, predictable pipelines that free managers to make higher-value choices. When you let software handle repeatable work, your team gains time to focus on fit, coaching, and strategy.

Boosting productivity and throughput in hiring and staffing

Algorithms can screen far more resumes per hour than a single recruiter. L’Oréal reported hiring 10x faster and interviewing 25% more applicants after deploying an artificial intelligence solution based on computational linguistics.

That speed cuts time-to-decision and raises productivity without losing quality when you set clear metrics and guardrails.

Combining algorithms with manager judgment for smarter decisions

Studies show pairing models with human intuition improves staffing forecasts and reduces unstable schedules. Use tools to surface signals, then let a manager weigh context before final calls.

- Use data to flag high-volume, repeatable tasks for automation.

- Keep human review for edge cases and culture fit.

- Phase rollout so learning improves outcomes over time.

- Translate efficiency gains into faster fills and better matches.

Personalized insights for employees and remote teams

Companies like Deliveroo give workers targeted metrics—acceptance time and travel time—that let people self-correct in real time.

“Personalized feedback helps workers improve without heavy-handed surveillance.”

In short, when algorithms and management work together, you get faster throughput, clearer decisions, and real-time performance signals that boost engagement and business results.

The downside: Fairness, transparency, and wellbeing risks

When software that shapes work decisions hides its logic, fairness and wellbeing can suffer. Models often reflect past choices: if prior hires excluded groups, the tool may downgrade similar candidates and reproduce bias.

Many systems act as black boxes. That makes it hard to explain a decision or assign accountability when a worker is penalized.

Bias, black boxes, and accountability gaps

Research shows that biased training data produces unfair outputs. Audit the data, surface the features that drive outcomes, and correct skewed patterns before they harm people.
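One simple way to start such an audit is to compare selection rates across groups. The sketch below computes each group's rate relative to the highest-rate group, often called an impact ratio. The records are made up, and the 0.8 cutoff is the conventional four-fifths rule of thumb, assumed here for illustration; it is not a legal test, and a real audit would follow the requirements of the applicable law.

```python
from collections import Counter


def selection_rates(records):
    """records: iterable of (group, selected) pairs -> {group: rate}."""
    totals, selected = Counter(), Counter()
    for group, was_selected in records:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {g: selected[g] / totals[g] for g in totals}


def impact_ratios(rates):
    """Each group's selection rate divided by the highest group's rate."""
    best = max(rates.values())
    if best == 0:
        return {g: 1.0 for g in rates}  # nobody selected, nothing to compare
    return {g: r / best for g, r in rates.items()}


# Made-up screening outcomes: (group label, advanced to interview?)
records = (
    [("A", True)] * 40 + [("A", False)] * 60
    + [("B", True)] * 24 + [("B", False)] * 76
)

THRESHOLD = 0.8  # assumed four-fifths rule of thumb

rates = selection_rates(records)
ratios = impact_ratios(rates)
flagged = [g for g, r in ratios.items() if r < THRESHOLD]
print(rates, ratios, flagged)
```

A flagged group is a prompt to investigate the data and features behind the gap, not proof of discrimination on its own.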

Surveillance, autonomy, and “digital Taylorism” concerns

Surveillance spiked during the pandemic: keystroke tracking and screenshots became common. Such practices can erode autonomy, chill protected activity, and lower engagement.

Worker health, safety, and scheduling harms

Rigid quotas and pace targets increase injury risk, especially in logistics. One concrete example: hospitality room assignments that ignore task nuance raised physical strain for housekeepers.

- You’ll spot fairness issues where historical data skews outcomes, and see how audits can correct them.

- Balance surveillance with guardrails: clear goals, adjustable nudges, and human override.

- Prepare escalation paths and transparent communications so trust can be rebuilt quickly.

“When people cannot see or challenge a decision, trust and safety suffer.”

Your role as a manager or HR leader in an algorithmic workplace

Managers who blend tool-based recommendations with human judgment get better outcomes. You must build new competencies so software helps rather than harms. This means clear ethics, practical data skills, and steady change leadership.

New skills: data literacy, ethics, and change leadership

Start small: teach your managers how to read basic model outputs, spot anomalies, and ask the right questions. Combine those skills with ethical reasoning so decisions respect fairness and worker dignity.

Augmentation vs. automation: choosing the right level of control

Adopt an augmentation-first approach that keeps critical decisions with a manager and lets software offer recommendations. Set thresholds, escalation paths, and simple practices for documenting when you follow or override a recommendation.
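A lightweight decision log makes this practice concrete. The sketch below assumes a hypothetical confidence threshold of 0.75 for mandatory review and invented field names; the one rule it enforces is that an override must carry a written reason.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

REVIEW_THRESHOLD = 0.75  # assumed cutoff; tune to your own risk tolerance


@dataclass
class Decision:
    case_id: str
    recommendation: str  # what the software suggested
    confidence: float    # model confidence, 0.0 to 1.0
    final_action: str    # what the manager actually did
    reviewer: str
    reason: str = ""
    logged_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    @property
    def overridden(self) -> bool:
        return self.final_action != self.recommendation


def needs_escalation(confidence: float, high_impact: bool) -> bool:
    """Route high-impact or low-confidence cases to human review."""
    return high_impact or confidence < REVIEW_THRESHOLD


def record(log: list, decision: Decision) -> None:
    """Append a decision, rejecting overrides that lack a reason."""
    if decision.overridden and not decision.reason.strip():
        raise ValueError("an override must include a reason")
    log.append(decision)


log = []
record(log, Decision("c-101", "advance", 0.90, "advance", "manager_a"))
record(log, Decision("c-102", "reject", 0.60, "advance", "manager_b",
                     reason="relevant experience not captured in the CV"))

override_rate = sum(d.overridden for d in log) / len(log)
print(f"override rate: {override_rate:.0%}")
```

Reviewing the override rate and the stated reasons over time shows where the recommendations and manager judgment diverge.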

- Train with short learning sprints and scenario exercises to build judgment.

- Embed recommendations into workflows so decisions are timely and defensible.

- Measure adoption and decision quality, then refine your approach from the results.

“Pair algorithm recommendations with human review to improve outcomes and trust.”

Current U.S. policy and regulation you should watch

Federal and state actions now push organizations to explain what they collect, why, and how workers can contest decisions.

Stop Spying Bosses Act and the NLRB’s surveillance stance

Stop Spying Bosses Act: the bill would require clear disclosures, limit worker data collection, and create a Privacy and Technology Division at the U.S. Department of Labor. That could force new processes for what information you capture and share.

NLRB guidance: the General Counsel warns that broad monitoring can interfere with protected labor activity. Design controls so monitoring does not chill organizing or other rights.

White House AI Bill of Rights and state-level moves

The White House blueprint calls for notice, explainability, and safe systems where artificial intelligence touches hiring and evaluation.

States are moving too: New York pushes anti-bias audits for recruiting tech, while Illinois limits video interview analysis. These rules shape how your hiring pipelines use data and models.

What the EU’s Platform Work Directive signals for U.S. companies

The EU wants algorithm transparency, worker data access, and contestability. Treat this as a signal to build similar protections into U.S. programs now.

- You’ll map disclosures, worker access, and repeatable processes to comply without slowing operations.

- You’ll calibrate control to avoid chilling rights and separate productivity tools from surveillance.

- You’ll brief executives so companies can invest early in compliant data practices and management governance.

“Design policies that give workers clear access to information and a path to challenge decisions.”

Responsible implementation: Strategy, change, and continuous evaluation

Begin implementation in processes that repeat the same tasks daily and produce large, reliable datasets. Start small so you can prove value and reduce risk.

Start where work is standardized and high-volume

Pick areas with clear inputs and outputs—resume screening, routine scheduling, and simple routing are good examples.

These areas let a new system show quick wins in efficiency and productivity while keeping stakes low.

Communicate, include, and train to build trust

Transparent communication shapes perception. Explain purpose, limits, and who is accountable.

Include workers in pilots and listening sessions so you surface edge cases before scaling.

Measure outcomes and create channels for worker feedback

Track efficiency, quality, and experience with clear metrics. Set thresholds to pause or revise models that underperform.
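As a rough illustration, pause thresholds can be kept as explicit guardrails and checked on each reporting cycle. The metric names and limits below are hypothetical placeholders, not recommended values.

```python
# Hypothetical guardrails: metric name -> (kind of limit, limit value)
GUARDRAILS = {
    "time_to_fill_days": ("max", 30.0),   # efficiency
    "quality_score": ("min", 0.70),       # quality
    "worker_satisfaction": ("min", 3.5),  # experience, on a 1-5 survey scale
}


def breaches(metrics: dict) -> list:
    """Return a description of every guardrail the metrics violate."""
    found = []
    for name, (kind, limit) in GUARDRAILS.items():
        value = metrics.get(name)
        if value is None:
            found.append(f"{name}: missing")  # unmeasured counts as a breach
            continue
        too_high = kind == "max" and value > limit
        too_low = kind == "min" and value < limit
        if too_high or too_low:
            found.append(f"{name}: {value} breaches {kind} limit {limit}")
    return found


def review_status(metrics: dict) -> tuple:
    """Pause and review if any guardrail is breached, otherwise continue."""
    found = breaches(metrics)
    return ("pause_and_review" if found else "continue", found)


this_month = {
    "time_to_fill_days": 34.0,
    "quality_score": 0.82,
    "worker_satisfaction": 3.9,
}
status, problems = review_status(this_month)
print(status, problems)
```

Treating a missing metric as a breach is a deliberate choice in this sketch: a system that is not being measured should not keep running unreviewed.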

Offer always-on feedback channels so employees can report issues and suggest fixes in real time.

Governance: audits, transparency, and human-in-the-loop

Put governance in place—regular audits, documented decisions, and human review for high-impact outcomes.

- Prioritize areas with plentiful data and repeatable tasks.

- Design change plans that clarify boundaries for managers and staff.

- Train teams on tools and ethical scenarios so real decisions stay thoughtful.

“Treat implementation as a living program: iterate based on research, evidence, and worker input.”

Real-world contexts: Hiring, scheduling, and gig work examples

Real workplace examples show how hiring tools, scheduling systems, and gig platforms change daily operations and worker experience.

Recruiting and selection: screening and interview analytics

In hiring, companies use algorithms to screen CVs and apply NLP to written responses. Video interview analysis appears in some tools but is now subject to laws like Illinois’s Artificial Intelligence Video Interview Act.

Practical point: break the funnel into sourcing, screening, and assessment so you can focus controls where data-driven tools add the most value.

Workforce scheduling in retail, hospitality, and logistics

Forecasting tools predict demand and suggest schedules. Studies show combining those recommendations with manager judgment reduces unstable hours and improves outcomes.

Platform work: task assignment, quotas, and pay-setting

On gig platforms, task engines prioritize speed, skills, and geography. Benchmarks and quotas shape pay and behavior—Deliveroo’s reports on acceptance and travel time are one case in point.

- Break down where tools add value in the hiring funnel and comply with interview analytics rules.

- Use forecasting to staff the right people at the right times while avoiding harmful schedule instability.

- Layer human review on task assignment so context and fairness catch what models miss.

- Measure meaningful performance: quality, throughput, and candidate experience—not just easy signals.

“Set fair time benchmarks and explain why a candidate advanced or a shift was assigned.”

Blend algorithmic management with clear escalation paths so your managers can adjust when real conditions change. Use data and worker input to calibrate targets and build trust.

The future of work: AI, data power, and worker voice

Access to clear information will decide whether the future favors workers or concentrates control with employers.

Shifting power dynamics through data access and transparency

When you give workers access to the same data you use, information asymmetries shrink.

Transparency reports, explanation summaries, and clear disclosures about systems and inputs rebalance power.

These steps let workers see why a decision happened and raise concerns early.

Worker organizing, whistleblowing, and collective rights

Worker-led action has already exposed harms from quotas and surveillance in the gig economy.

That pressure shaped policy responses and company choices.

- Provide appeal channels: let workers contest and correct data-driven outcomes.

- Design for autonomy: configurable nudges and opt-in visibility reduce coercive control.

- Protect collective rights: avoid practices that chill organizing or expose organizers.

“Treat transparency and worker voice as strategic advantages, not compliance costs.”

By expanding data access, improving information flows, and aligning with research-backed practices, your organization can compete for talent while supporting fair work in a changing economy.

Conclusion

This guide leaves you with a practical roadmap to use data-driven tools while keeping people front and center.

You’ll see how algorithmic management can lift efficiency and productivity when you pair systems with clear governance, training, and human review.

Follow a simple approach: pick areas with repeatable work, ask the right questions about data and explainability, and set outcome metrics that include fairness and safety.

Track results over time, build appeal paths, and keep managers responsible for final decisions so companies and organizations can scale benefits while protecting workers.

Use these recommendations to brief leadership and prepare your business for policy shifts that demand transparency and contestability.