You’ll get a friendly roadmap you can use right away to guide decisions when you design and deploy systems. This article distills converging ideas from Asilomar, Montreal, IEEE, IBM, the EU Trustworthy AI guidelines, OECD, and others into clear steps you can follow.

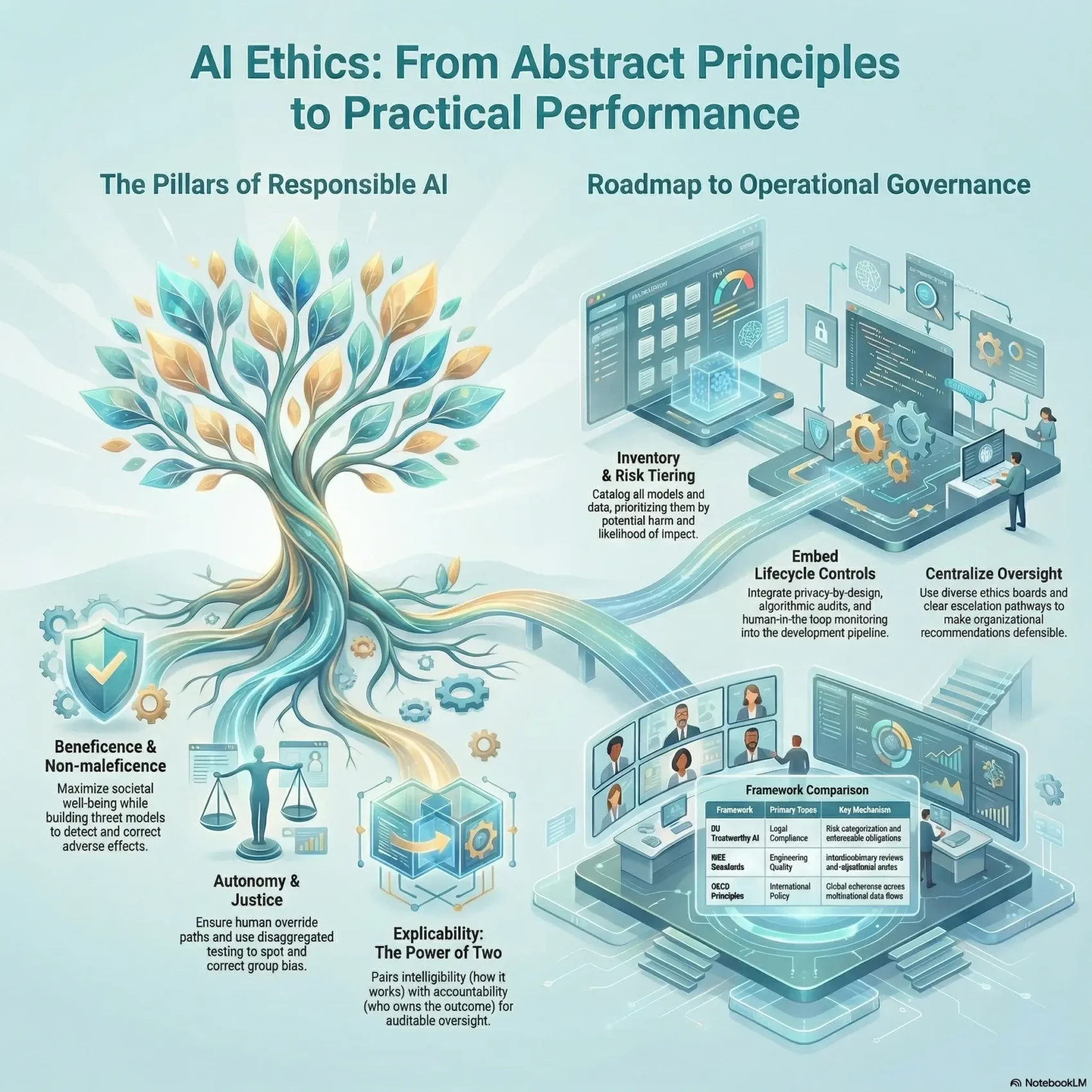

This piece explains why principle proliferation happened and how experts synthesized common principles into five core values: beneficence, non‑maleficence, autonomy, justice, and explicability. You’ll see how those ideas link to business outcomes like safety, societal impact, and trust.

Along the way, you’ll learn practical governance approaches, lifecycle controls championed by IBM, and how the OECD and EU align on inclusivity, transparency, and accountability. The goal is to help you turn abstract ideals into roles, tests, and KPIs that match your organization’s values and the realities of working with artificial intelligence.

Key Takeaways

- Get a practical roadmap built from leading sources and real governance models.

- Understand the five core principles that anchor fair decision-making.

- See how explicability ties intelligibility to accountability in systems.

- Compare IEEE, EU, and OECD guidance to choose what fits your context.

- Find steps to move from principles to measurable practices and KPIs.

What you’ll learn in this Ultimate Guide to responsible AI

This section lays out what you’ll learn: hands‑on practices, governance examples, and ways to measure trust in real projects.

You’ll see how IBM’s lifecycle approach assigns roles, training, processes, and tools to improve trustworthiness across design, build, and operations.

The EU guidance stresses lawful, ethical, and robust systems at every stage, while OECD updates push for international policy coherence so cross‑border adoption is smoother.

“Practical governance closes the gap between policy and daily work.”

- Practical practices: steps from problem framing to post‑deployment oversight you can use today.

- Comparisons and use cases: clear ways to apply leading guidelines to content generation, recommendations, and risk scoring.

- Governance and training: examples that boost adoption without slowing innovation and show measurable benefits.

- Procurement and evidence: repeatable checklists for third‑party models and audit‑ready documentation of decisions.

By the end, you’ll understand the benefits of aligning systems with your organization’s values and stakeholder expectations, plus concrete ways to prioritize projects with the largest impact.

Defining an AI ethics framework and why it matters now

Turning high-level principles into concrete tasks makes responsible system design achievable for teams.

Analyses of leading initiatives converge on five core principles: beneficence, non‑maleficence, autonomy, justice, and explicability. Explicability pairs intelligibility (how a model works) with accountability (who owns outcomes). That pairing helps you turn abstract values into testable requirements during development and deployment.

From principles to practice: turning values into system requirements

Define the framework as the bridge between policy and code. Use it to map principles into acceptance criteria you can build, test, and monitor.

- Make values measurable: convert fairness and privacy into thresholds for model metrics and data handling checks.

- Assign responsibility: document who reviews decisions and who signs off on releases.

- Context matters: requirements for credit, clinical triage, or moderation will differ even with the same principles.

- Operational controls: dataset documentation, model cards, and monitoring thresholds link business implications to technical steps.

Finally, build a shared language so product owners, engineers, and legal align on what “responsible” means for your system.
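To make "values measurable" concrete, here is a minimal sketch of a fairness acceptance criterion. It assumes a simple selection-rate gap (a demographic parity style check) and a hypothetical threshold of 0.10; the function names, the threshold, and the sample data are illustrative assumptions, not values taken from any of the guidelines discussed here.

```python
from collections import defaultdict

# Hypothetical threshold: largest allowed gap in positive-outcome rates between groups.
MAX_SELECTION_RATE_GAP = 0.10


def selection_rates(records):
    """Return the share of positive decisions per group.

    `records` is an iterable of (group, decision) pairs, where decision is 0 or 1.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, decision in records:
        totals[group] += 1
        positives[group] += decision
    return {group: positives[group] / totals[group] for group in totals}


def passes_fairness_gate(records, max_gap=MAX_SELECTION_RATE_GAP):
    """Acceptance check: the largest gap between group selection rates stays within max_gap."""
    rates = selection_rates(records)
    gap = max(rates.values()) - min(rates.values())
    return gap <= max_gap, gap


# Illustrative data only: group A approved 6 of 10, group B approved 4 of 10.
sample = [("A", 1)] * 6 + [("A", 0)] * 4 + [("B", 1)] * 4 + [("B", 0)] * 6
ok, gap = passes_fairness_gate(sample)
print(f"gap={gap:.2f} passes={ok}")
```

A check like this can run in a test suite or release pipeline so that a fairness requirement fails a build the same way a broken unit test would. The right metric and threshold depend on context; a credit model and a content moderation model will rarely share the same gate.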

The problem of principle proliferation and how to navigate it

A crowded field of principle statements has left many teams unsure which guidance to follow. Researchers cataloged 47 separate principles across six major initiatives and found broad overlap. The risk is redundancy or confusion that slows work on policy, standards, and best practices.

Start by accepting that overlap is normal. Then use a simple normalization step: map similar terms to a short list of core values. A synthesized set of five principles provides a coherent basis for future policies and recommendations.

- Adopt a meta‑map to trace internal controls back to external recommendations so reviews aren’t duplicated.

- Use a standardized intake process to turn stakeholder concerns into concrete requirements and avoid “principle shopping.”

- Keep a living register of applicable principles and update mappings as new guidance appears.

- Prepare concise summaries for executives while keeping detailed mappings for risk and compliance teams.
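The normalization step and meta‑map described above can be sketched as a small lookup table. The mapping below is a hypothetical example: the beneficence and non‑maleficence entries follow the descriptions later in this article, while the remaining entries are assumptions you would replace with your own register.

```python
# Core values from the synthesized set of five principles.
CORE_VALUES = {"beneficence", "non-maleficence", "autonomy", "justice", "explicability"}

# Hypothetical meta-map: terms as they appear in external guidance -> core value.
TERM_TO_CORE = {
    "well-being": "beneficence",
    "common good": "beneficence",
    "sustainability": "beneficence",
    "privacy": "non-maleficence",
    "security": "non-maleficence",
    "human oversight": "autonomy",
    "fairness": "justice",
    "transparency": "explicability",
    "accountability": "explicability",
}


def normalize(terms):
    """Group raw principle terms under core values; collect unmapped terms for review."""
    mapped = {value: [] for value in sorted(CORE_VALUES)}
    unmapped = []
    for term in terms:
        key = term.strip().lower()
        core = TERM_TO_CORE.get(key)
        if core is None:
            unmapped.append(term)  # needs a human decision before it enters the register
        else:
            mapped[core].append(key)
    return mapped, unmapped


mapped, unmapped = normalize(["Privacy", "Fairness", "Transparency", "Solidarity"])
print(unmapped)
```

Keeping unmapped terms in a separate list is a deliberate choice: new guidance should trigger a review of the register rather than be silently dropped or forced into the nearest category.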

For a hands‑on approach to decision processes and linking policies to operations, see this practical guide to decision making. It helps your stakeholders align around fewer, clearer statements and move from theory to measurable action.

The five core principles that anchor ethical AI

At the heart of responsible design are five core principles that guide choices from data collection to deployment. These values help you translate high‑level aims into tests, roles, and measurable outcomes.

Beneficence and non‑maleficence: maximizing benefit, minimizing harm

Beneficence focuses on well‑being, the common good, and sustainability. You’ll operationalize it by linking expected benefits to measurable outcomes and noting who gains.

Non‑maleficence highlights privacy, security, and avoidance of harm. Build threat models for privacy, safety, and misuse, and add controls that detect and correct adverse effects early.

Autonomy and justice: human agency, fairness, and equity

Autonomy means keeping humans in charge of when and how to delegate decisions. Define clear override and rollback paths so you can restore human control quickly.

Justice requires that you measure fairness across affected groups. Correct bias in data and models and document the impact on different communities.

Explicability: intelligibility and accountability working together

Explicability fuses intelligibility with accountability to enable oversight. Build explanations or simpler models where needed and assign accountable owners for each decision path.

“Make these principles auditable: connect intent to outcomes with documentation, sign‑offs, and monitoring.”

- Align these core principles to product and risk goals so tradeoffs are clear.

- Make requirements auditable with test evidence, owners, and monitoring records.

- Use these values to drive measurable improvements in fairness, autonomy, and reduced harm.

Comparing leading frameworks: IEEE, EU Trustworthy AI, and OECD

Understand how major guidance bodies align and where they push different priorities for real-world use.

At a high level they share a base: protection of rights, transparency, safety, and accountability. That overlap gives you common controls to adopt across products.

Where they converge

- Human rights and fairness as primary goals.

- Clear expectations for explainability and monitoring.

- Safety, robust testing, and documented sign‑offs for releases.

Key differences in emphasis

The EU guidance stresses lawful, ethical, and robust systems with strong privacy and data governance controls. The guidelines themselves are voluntary, but their “lawful” component ties requirements back to binding law such as the GDPR.

IEEE takes an engineering‑first stance. It recommends interdisciplinary review boards, algorithmic audits, and adversarial testing as part of technical standards.

The OECD brings a policy and international lens. Its updates promote coherence across countries so multinational adoption is smoother.

Choosing the right reference model for your context

Map your controls to one reference model, note gaps, and prepare a short crosswalk for leadership. Pick the model that fits your regulatory exposure and risk profile, then align policies and rollout for steady adoption.

Governance that works: boards, policies, and processes you can trust

Good governance turns lofty promises into repeatable decisions your teams can rely on. Start by centralizing oversight so reviews, standards, and decision rights are visible and consistent across projects.

Building an effective ethics board with diverse stakeholders

Create a clear board charter that states remit, membership criteria, decision rights, and meeting cadence. This helps you match oversight to organizational size and risk.

Include domain experts, impacted community voices, legal, security, and product leads so the board captures real-world perspectives.

Roles, responsibilities, and escalation pathways

Name an accountable executive for each area: product, model risk, legal, and security. When ownership is explicit, accountability follows.

Codify intake, review, approval, monitoring, and escalation steps so your team applies consistent processes and documents every decision.

- Design a board charter with clear remit and meeting cadence to support policies.

- Map roles and responsibilities to reduce overlap and speed approvals.

- Adopt practical tools for issue tracking, documentation, and audit trails.

- Build training so teams use repeatable practices and understand thresholds that trigger rollbacks.

“Centralized governance and diverse voices reduce blind spots and make your recommendations defensible.”

By aligning your program to external standards and using good tools, you improve operational trust. These steps give you concrete recommendations to show regulators, partners, and users how decisions were made.

Embedding ethics across the AI lifecycle

Make lifecycle steps the place where design choices meet practical controls and clear ownership. This keeps privacy, data quality, and accountability tied to real work instead of abstract policy.

Design and data: privacy, consent, and data governance

From day one, define consent patterns and data minimization rules. Document who can access datasets and why, and enforce role‑based access controls.
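As an illustration of documenting who can access datasets and why, the following sketch combines a role check with a mandatory purpose field and an audit log. The roles, permissions, and field names are hypothetical; a production system would normally rely on the access control features of its data platform rather than hand‑rolled code.

```python
from dataclasses import dataclass

# Hypothetical roles and the dataset actions each may perform.
ROLE_PERMISSIONS = {
    "data_steward": {"read", "write", "delete"},
    "ml_engineer": {"read"},
    "auditor": {"read"},
}


@dataclass(frozen=True)
class AccessRequest:
    user: str
    role: str
    dataset: str
    action: str
    purpose: str  # documented reason for access


def authorize(request, audit_log):
    """Allow the action only if the role grants it and a purpose is stated; log every decision."""
    allowed = (
        request.action in ROLE_PERMISSIONS.get(request.role, set())
        and bool(request.purpose.strip())
    )
    # Denied requests are logged too, so reviews can see attempted access.
    audit_log.append((request.user, request.dataset, request.action, request.purpose, allowed))
    return allowed


log = []
granted = authorize(AccessRequest("ana", "ml_engineer", "claims", "read", "bias audit"), log)
denied = authorize(AccessRequest("ana", "ml_engineer", "claims", "delete", "cleanup"), log)
print(granted, denied, len(log))
```

Requiring a purpose string on every request is one simple way to make "who accessed what, and why" answerable during an audit.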

GDPR and CCPA raise the bar: provide clear notices, honor user rights, and record consent. These steps make transparency and compliance practical.

Development and testing: audits, robustness, and adversarial checks

Build development checkpoints that include model documentation, reproducibility evidence, and peer review. Require algorithmic audits before releases.

Run robustness and adversarial tests to find unexpected behavior. Use these tests to update risk registers and mitigation plans.

Deployment and monitoring: risk controls and human oversight

Set deployment controls so humans can review high‑stakes decisions and users can appeal outcomes. Create dashboards for drift, subgroup performance, and privacy alerts.

Tie alerts to named owners and documented processes so accountability is traceable. Make redress channels clear and timely.
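One common way to put a number on drift is the population stability index (PSI), which compares the distribution of a feature at training time with what the system sees in production. The sketch below is a plain‑Python version under stated assumptions: equal‑width bins, a hypothetical alert threshold of 0.2 (a rule of thumb you should calibrate for your own data), and an illustrative alert payload that names an owner.

```python
import math

# Hypothetical alert threshold; calibrate against your own historical data.
PSI_ALERT_THRESHOLD = 0.2


def population_stability_index(expected, actual, bins=10):
    """Compare two samples of a numeric feature using PSI over equal-width bins."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 when all baseline values are equal

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor keeps the logarithm defined for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def drift_alert(expected, actual, owner, threshold=PSI_ALERT_THRESHOLD):
    """Return an alert routed to a named owner when PSI exceeds the threshold, else None."""
    psi = population_stability_index(expected, actual)
    if psi <= threshold:
        return None
    return {"owner": owner, "psi": round(psi, 3), "action": "review"}


baseline = [i / 100 for i in range(100)]       # illustrative training-time values
shifted = [0.5 + i / 200 for i in range(100)]  # illustrative production values
print(drift_alert(baseline, shifted, owner="risk-team"))
```

Returning the owner inside the alert keeps the monitoring signal and the accountable person in the same record, which is what makes the trail traceable later.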

- Design: consent, minimization, controlled data access.

- Development: documentation, reproducibility, peer review.

- Testing: algorithmic audits, robustness, adversarial checks.

- Deployment: human‑in‑the‑loop, monitoring, user redress.

“Connect design inputs to operational outcomes so explainability and accountability are auditable.”

Fairness, bias, and inclusion: preventing discriminatory outcomes

Preventing unfair outcomes starts with how you collect and manage the data that feeds decisions. Historical records can carry patterns that repeat past injustices. For example, Amazon’s scrapped resume screening tool showed how biased historical data can produce discriminatory results.

Dataset curation and impact assessment techniques

You’ll curate datasets intentionally, checking representation, labeling quality, and known skews that can seed bias into models. Run structured impact assessments to surface potential harm to specific groups and document mitigations before launch.

Continuous evaluation and redress mechanisms

Select fairness metrics that match your use case and monitor them continuously. Don’t treat a single pre‑release check as sufficient; set automated alerts for drift and subgroup performance.

- Accountability: assign owners for fairness monitoring and corrective actions with SLAs for investigation and remediation.

- Redress: create clear, user‑friendly pathways so people can contest outcomes and get timely resolutions.

- Stakeholder input: engage communities early to validate whether your fairness definitions match lived experience.

- Continuous learning: capture lessons learned and fold them into updated practices so improvements stick across releases.

“Intentional curation, ongoing audits, and clear redress make fairness operational and auditable.”

Transparency, explainability, and trust

Clear disclosures and plain‑language model notes help people make safe, informed choices about systems they use. Explicability pairs intelligibility with accountability, so explanations must show both how decisions arise and who owns them.

Model documentation, disclosures, and stakeholder‑aligned explanations

Produce concise model documentation that states goals, training data sources, limits, and common failure modes. Use plain language so end users, auditors, and partners all get actionable facts.

- Disclose when and why artificial intelligence is used, how human oversight works, and how to challenge outcomes.

- Match explanation methods to model types; prefer interpretable approaches for higher‑risk cases.

- Align disclosures to your organization’s values and applicable guidelines to build credibility and maintain trust.

- Assign owners for documentation quality and keep artifacts current as models evolve.

- Pair transparency with privacy controls so disclosures inform without exposing sensitive information.

“Make explanations readable and actionable so stakeholders can evaluate impact and raise concerns.”

Privacy and security by design in AI systems

Treat privacy and secure operations as product features that protect people and your reputation. Make these protections visible in requirements, roadmaps, and release criteria so teams build them from day one.

Safeguarding PII, access controls, and secure model operations

GDPR and CCPA require clear disclosures, user control, and strong protections. You should align notices and rights handling to those rules even if you operate beyond those jurisdictions.

- Implement privacy by design with minimization, purpose limitation, and consent tracking across the full data lifecycle.

- Enforce access controls with least privilege for datasets, models, and infrastructure, backed by logging and periodic reviews.

- Deploy encryption in transit and at rest, plus key management and segregation of duties to limit the blast radius of a breach.

- Operationalize policies for retention, deletion, and incident response tailored to your AI workflows and feature stores.

Secure operations also need hardened containers, secrets management, and continuous scanning. Test for leakage and membership inference to protect PII, trade secrets, and user trust. These steps make your systems safer and help you meet regulatory expectations.

“Designing strong controls early saves time, reduces risk, and reinforces user confidence.”

Foundation models and generative AI: new risks, new guardrails

Generative technologies expand what you can build, yet they raise clear questions about truth, ownership, and control.

Foundation models are large systems trained on vast, often unlabeled data with self‑supervision. They enable broad adaptation but also introduce serious risks like bias, false content (hallucinations), and reduced explainability.

Hallucinations, misuse, and content provenance

You’ll address hallucinations with retrieval augmentation, grounding, and human review for high‑consequence answers.

Reduce misuse with guardrails: content filters, rate limits, safety policies, and monitoring for abuse patterns.

Improve provenance by tracking sources, citing references when feasible, and labeling synthetic media and outputs to boost transparency.

Policy, copyright, and data sourcing transparency

Disclose data sourcing approaches and limits so customers know how training corpora shape behavior.

Expect divergent policies: lawsuits over false statements and copyright claims have emerged, and enforcement varies by country, as Japan’s recent change shows.

- Vet jurisdictions and document lawful bases for data use.

- Choose deployment (API, on‑prem, or private fine‑tune) to match security, privacy, and latency needs for your systems.

- Test defenses for prompt injection, exfiltration, and jailbreaks before scale‑up.

“Proactive provenance and clear policies are your best defense against misuse and legal surprises.”

The regulatory landscape: EU AI guidance and U.S. developments

Recent updates from the EU and OECD mean your compliance playbook needs clearer mapping to operational controls. The EU’s Trustworthy AI guidelines focus on autonomy, harm prevention, fairness, explicability, and strong data governance with human oversight.

The OECD’s 2024 update pushes international coherence across its 47 adherents, so you should expect cross‑border expectations to tighten. In the U.S., state laws like California’s CCPA and federal guidance remain in flux while regulators signal new requirements.

- Situate your program against EU guidance so vendor reviews and procurement match global standards.

- Track OECD principles to anticipate international alignment pressures on data flows and operations.

- Align internal policies and documentation now to ease future adoption and regulatory reviews.

- Map controls to standards and guidelines to speed partner assessments and audits.

“Prepare evidence of oversight and clear incident reporting to avoid surprises as rules tighten.”

Assess the implications for procurement, vendor management, and incident reporting in your specific context. Doing so reduces risk and helps you show regulators, partners, and society that your approach to responsible use is documented and verifiable.

Real‑world applications: healthcare, hiring, and public sector use

High‑stakes use cases teach clear lessons about risk, oversight, and public trust. You’ll read three examples where governance changed outcomes and where poor controls caused real harm.

Lessons learned from high‑profile incidents

Healthcare: radiology support tools helped clinicians catch findings faster, but only when governance required human review, dataset checks, and clear thresholds. Those controls reduced diagnostic risk and improved clinical workflows.

Hiring: a resume‑screening project was shut down after historical data caused gender bias in rankings. That incident shows how biased inputs lead to reputational loss and legal exposure.

Public sector: government systems face intense scrutiny on fairness and transparency. Community engagement and easy redress channels proved critical to maintain trust.

- Data curation and representative sampling to prevent entrenched bias.

- Human review for high‑stakes decisions, not full automation.

- Continuous monitoring for drift and measurable impact on people.

- Aligning application goals with organizational values like equity and dignity.

- Embed regulatory and stakeholder communication plans before launch.

“Practical practices—data checks, oversight, and monitoring—are what prevent harm in deployed systems.”

Standards, tools, and practices to operationalize your framework

To run safe systems at scale, pair living standards with tools that automate governance tasks.

Model cards, audits, and governance platforms

Model cards standardize documentation of purpose, datasets, metrics, limits, and evaluation methods. They make reviews faster and reduce guesswork for teams and partners.

- Independent audits probe fairness, robustness, leakage, and security. Use external reviews to catch blind spots and document fixes.

- Governance platforms centralize approvals, monitor models, and enforce lifecycle guardrails. Tools like IBM watsonx.governance help manage generative models and controls at scale.

- Define practices for dataset versioning, lineage tracking, and reproducibility so investigations are reliable and efficient.

- Align transparency artifacts to stakeholder needs and link them to accountable owners and sign‑offs. Add clear redress channels for end users.
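A model card can start as a small structured record with a completeness check that doubles as a release gate. The sketch below uses field names that mirror the sections listed above (purpose, datasets, metrics, limits, evaluation methods) plus an owner; the class, the model name, and the metric value are illustrative assumptions, not a formal model card schema.

```python
from dataclasses import dataclass, field, fields


@dataclass
class ModelCard:
    """Minimal model card; field names are illustrative, not a formal schema."""
    name: str
    purpose: str
    datasets: list = field(default_factory=list)
    metrics: dict = field(default_factory=dict)
    limitations: list = field(default_factory=list)
    evaluation_methods: list = field(default_factory=list)
    owner: str = ""

    def missing_sections(self):
        """Return names of sections that are still empty, for use as a release gate."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]


card = ModelCard(
    name="credit-risk-v3",  # hypothetical model
    purpose="Rank loan applications for manual review",
    datasets=["applications_2023"],
    metrics={"auc": 0.81},  # made-up value for illustration
    owner="model-risk@example.com",
)
print(card.missing_sections())
```

Blocking a release while `missing_sections()` is non‑empty is a lightweight way to keep documentation current without a separate review meeting.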

As your framework matures, refine standards and tools, build playbooks for common patterns, and keep audit trails and roles current. This makes oversight practical and ensures ongoing accountability.

Your step‑by‑step roadmap to adopt an AI ethics framework

Start with a focused inventory so you know what models, data, and risks you already manage. This lets you prioritize the highest‑impact work and avoid wasting effort on low‑risk items.

Assess current state and prioritize high‑impact risks

Take stock: list models, data uses, owners, and known issues. Rank items by potential harm and likelihood so you focus on urgent development needs.
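Ranking by potential harm and likelihood can be as simple as a scored inventory. The following sketch assumes 1 to 5 scales for both dimensions and multiplies them into a risk score; the scales, the scoring rule, and the example entries are hypothetical and should be replaced by your own risk methodology.

```python
from dataclasses import dataclass


@dataclass
class InventoryItem:
    model: str
    owner: str
    harm: int        # 1 (minor) to 5 (severe), assumed scale
    likelihood: int  # 1 (rare) to 5 (frequent), assumed scale

    @property
    def risk_score(self):
        return self.harm * self.likelihood


def prioritize(items):
    """Sort by risk score, highest first; ties go to the higher potential harm."""
    return sorted(items, key=lambda i: (i.risk_score, i.harm), reverse=True)


# Illustrative inventory entries only.
inventory = [
    InventoryItem("support-chatbot", "cx-team", harm=2, likelihood=4),
    InventoryItem("loan-scoring", "risk-team", harm=5, likelihood=3),
    InventoryItem("resume-screener", "hr-team", harm=4, likelihood=2),
]
ranked = prioritize(inventory)
print([item.model for item in ranked])
```

Breaking ties in favor of higher harm reflects a cautious stance: when two systems score the same, the one that could hurt people more gets reviewed first.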

Implement controls, training, and change management

Put controls in place by risk tier: privacy, fairness, explainability, and robustness. Deliver short training so teams know how to apply practices in daily development.

Iterate with feedback loops and external review

Embed processes for approvals, exceptions, and escalation so governance helps rather than blocks delivery. Use post‑incident reviews, monitoring, and audits to refine solutions over time.

- Inventory models and data; prioritize by impact and likelihood.

- Align controls to business objectives and risk tiers.

- Train teams and publish practical recommendations and templates.

- Bring in stakeholders and external experts to close blind spots.

“Continuous feedback and outside review turn early controls into lasting improvements.”

Measuring impact: KPIs for fairness, robustness, and accountability

Define KPIs that map directly to your principles and the real risks your systems create.

Start with a compact set of measures you can report weekly. Track outcomes across groups so you see disparities and trends. Use confidence intervals to avoid false alarms and to show whether gaps are meaningful.
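To show how a confidence interval separates a real disparity from noise, here is a minimal sketch that computes the gap in positive‑outcome rates between two groups with a normal‑approximation interval. The function names and the counts are illustrative assumptions, and the approximation is weak for small samples, where an exact or bootstrap method would be a better fit.

```python
import math


def rate_gap_with_ci(pos_a, n_a, pos_b, n_b, z=1.96):
    """Difference in positive-outcome rates (A minus B) with a normal-approximation CI.

    z=1.96 gives an approximate 95% interval.
    """
    p_a, p_b = pos_a / n_a, pos_b / n_b
    se = math.sqrt(p_a * (1 - p_a) / n_a + p_b * (1 - p_b) / n_b)
    gap = p_a - p_b
    return gap, (gap - z * se, gap + z * se)


def gap_is_meaningful(ci):
    """Treat the gap as meaningful only when the interval excludes zero."""
    low, high = ci
    return low > 0 or high < 0


# Same observed rates (30% vs 22.5%), different sample sizes; counts are illustrative.
gap_large, ci_large = rate_gap_with_ci(120, 400, 90, 400)
gap_small, ci_small = rate_gap_with_ci(12, 40, 9, 40)
print(gap_is_meaningful(ci_large), gap_is_meaningful(ci_small))
```

The two calls use identical rates, yet only the larger sample yields an interval that excludes zero. That is the practical value of the interval: it stops a weekly report from raising an alarm over a gap that the data cannot yet support.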

Measure resilience with stress tests, adversarial challenge rates, and mean time to recovery when performance degrades. Monitor privacy and security incidents closely: time to detect and time to remediate are leading indicators of operational risk.

- Fairness: compare outcomes across protected groups and log changes over time.

- Robustness: count adversarial hits, failure modes, and recovery times.

- Security & privacy: track incidents, detection latency, and fixes.

- Transparency: measure documentation coverage, explanation quality, and user comprehension.

- Accountability: record named owners, SLA adherence for investigations, and completion of corrective actions.

“Align KPIs to risk tiers so the most consequential systems get the tightest oversight.”

Report clear thresholds to leadership and trigger reviews when limits are crossed. This makes monitoring actionable and ties metrics back to governance, data controls, and the organizational goals you care about.

Engaging stakeholders: users, communities, regulators, and partners

Engage early and often so community concerns shape design choices from day one.

Make participation practical: co‑design with users and impacted groups to align features with real needs and shared values. Give clear notices and plain‑language documentation so people know when a system is in use, what they can access, and how decisions are made.

Be accountable in public. Share controls, test results, and improvement plans with regulators and partners to build trust. Follow the OECD’s calls for international cooperation, and the emphasis that the EU and IBM place on transparent communication and human oversight.

Operational steps you can apply:

- Create accessible grievance and redress channels and publish how you handle recommendations and complaints.

- Publish concise disclosures and user guides that explain access, limits, and safeguards for non‑experts.

- Document policies for community feedback and show how input changed roadmaps to close the loop with society.

“Open dialogue and clear processes turn stakeholder input into lasting improvements.”

Conclusion

The evidence here shows how five shared principles map to concrete controls and real benefits.

You can pick a reference framework and translate it into governance, lifecycle practices, and clear documentation that teams use every day.

Measure what matters — fairness, robustness, privacy, and accountability — and set feedback loops so metrics drive improvement and faster adoption.

Equip your teams with templates, tools, and playbooks to make responsible choices the easy default. This boosts operational speed and builds lasting trust.

You saw how converging principles translate into practical controls. Now you’re ready to implement them in ways that deliver real benefits while reducing risk for people and society.